The Prompt Is the Program

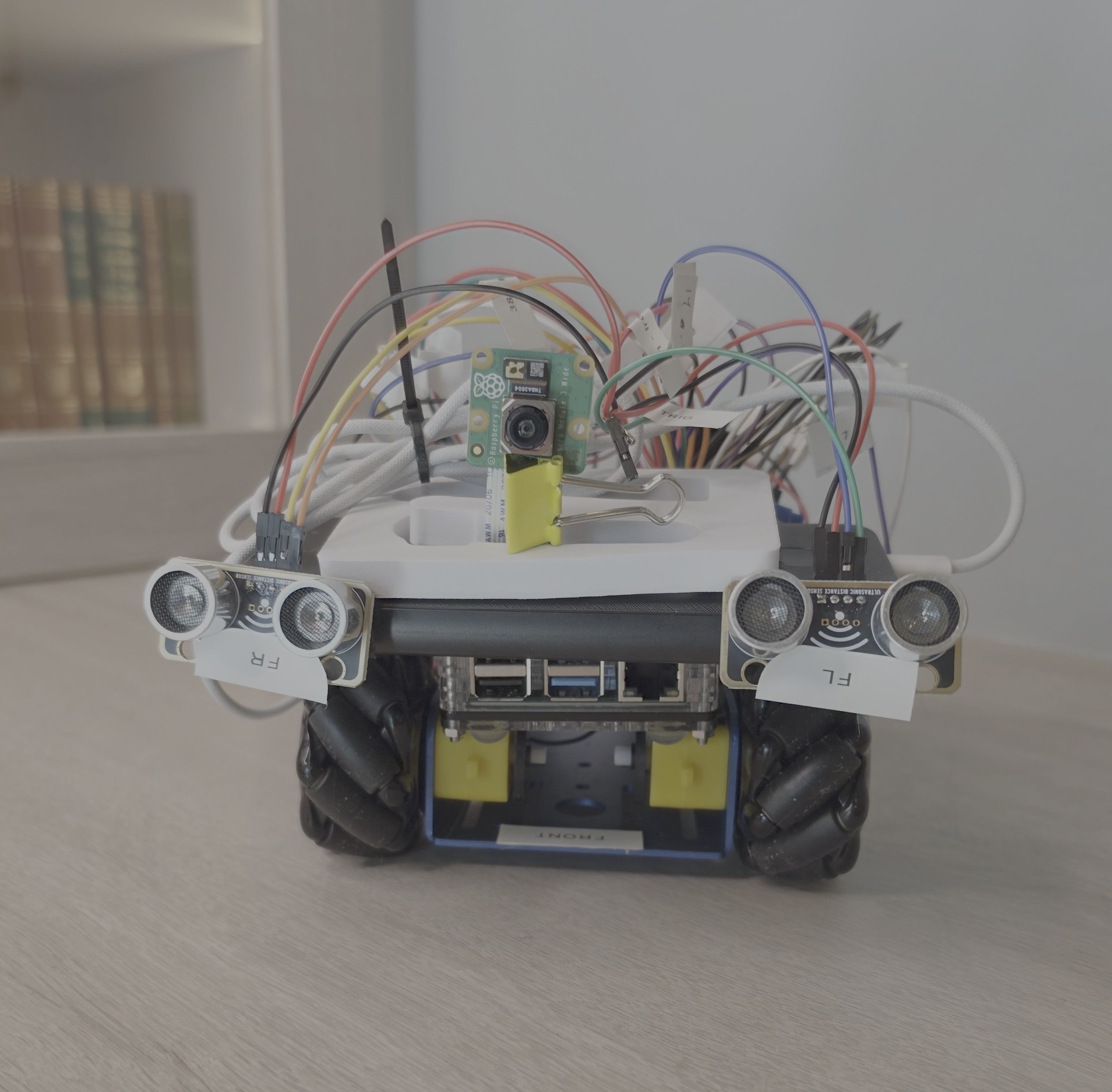

⚡ TL;DR

- Built a robot car controlled entirely by an LLM (Qwen3.5-27B on an RTX 5090), with no traditional control code
- The AI sees through a camera, reads ultrasonic sensors, and issues direct motor commands every ~1.3 seconds
- It follows me through the house autonomously for 15+ minute sessions, and it has crashed into a plant pot twice
- Key insight: qualitative reasoning goes to the AI; quantitative enforcement goes to traditional code

The operating system is optimized for a user that isn’t there anymore

Operating systems were built for humans. What happens when the user is an AI? I built a robot car to find out. ...
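The TL;DR's key insight (qualitative reasoning to the AI, quantitative enforcement to traditional code) can be made concrete with a small example. The post's own code is not shown here, so what follows is only a minimal Python sketch of how such a split could look. It assumes the model replies with a JSON object of wheel powers and a duration, and that a deterministic gate checks that reply against the ultrasonic reading before anything reaches the motors. `MotorCommand`, `parse_llm_command`, `enforce_limits`, the JSON field names, and every threshold value are hypothetical illustrations, not the author's implementation.

```python
import json
from dataclasses import dataclass

# Hypothetical limits: the article does not state the real values.
MAX_SPEED = 1.0                 # normalized wheel power: -1.0 full reverse, 1.0 full forward
MIN_FRONT_CLEARANCE_CM = 25.0   # no forward power when an obstacle is closer than this
MAX_DURATION_S = 1.0            # never apply a single command for longer than this


@dataclass
class MotorCommand:
    left: float        # left wheel power
    right: float       # right wheel power
    duration_s: float  # how long to apply the command


def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def parse_llm_command(text: str) -> MotorCommand:
    """Parse the model's reply, assumed (hypothetically) to be a JSON object."""
    data = json.loads(text)
    return MotorCommand(float(data["left"]), float(data["right"]), float(data["duration_s"]))


def enforce_limits(cmd: MotorCommand, front_distance_cm: float) -> MotorCommand:
    """Deterministic gate: the LLM decides *what* to do, this code decides *how much* is allowed."""
    left = clamp(cmd.left, -MAX_SPEED, MAX_SPEED)
    right = clamp(cmd.right, -MAX_SPEED, MAX_SPEED)
    duration = clamp(cmd.duration_s, 0.0, MAX_DURATION_S)
    if front_distance_cm < MIN_FRONT_CLEARANCE_CM:
        # Too close to something ahead: strip any forward power, keep reverse power.
        left = min(left, 0.0)
        right = min(right, 0.0)
    return MotorCommand(left, right, duration)


if __name__ == "__main__":
    # The model asks for an over-range, too-long forward move while a plant pot is 18 cm ahead.
    proposed = parse_llm_command('{"left": 1.5, "right": 0.8, "duration_s": 3}')
    print(enforce_limits(proposed, front_distance_cm=18.0))
    # → MotorCommand(left=0.0, right=0.0, duration_s=1.0)
```

The design choice mirrors the insight: the model is free to reason about where to go, but numeric bounds such as speed, duration, and minimum clearance are checked by plain code that behaves the same way every loop.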